Will Artificial Intelligence Break Universities?

Not if they elevate formation above information.

Universities are said to be threatened by every new technology. Remember massive open online courses (MOOCs)? Khan Academy, YouTube, and even Wikipedia were also expected to eat into enrollments. Now we have artificial intelligence, which can explain almost any concept on demand and generate impressive essays in seconds. This time really does feel different.

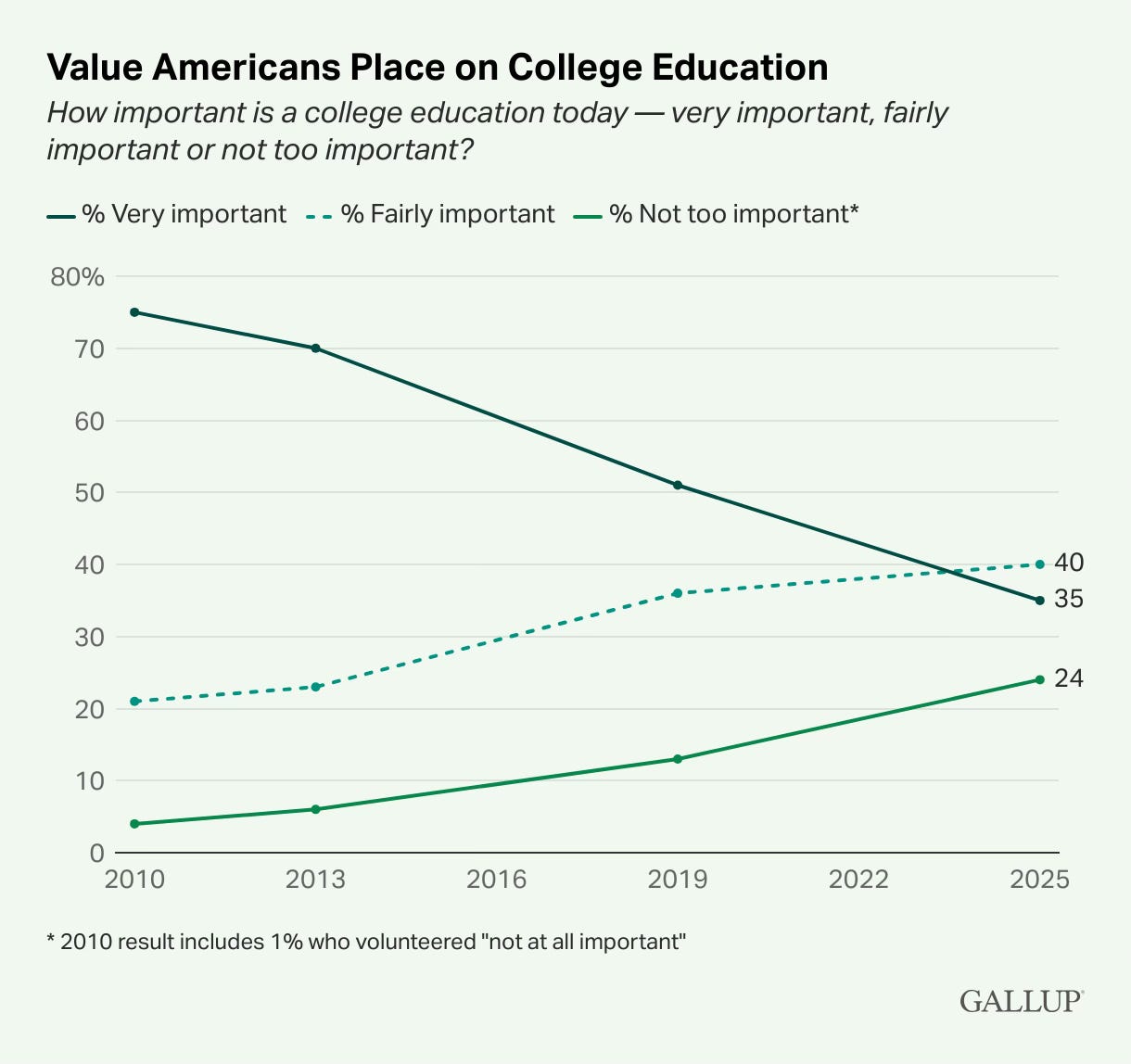

The anxiety around AI arrives at a moment when higher education is already under strain. Well before the Trump administration’s assault on academia, enrollments had been declining at many universities (though not all of them). One reason is that people are just having fewer children, so there aren’t as many high school graduates (the dreaded “demographic cliff”). Young people are also increasingly dissuaded by tuition costs that continue to rise far beyond inflation. And public trust in American higher ed has declined dramatically, especially among Republicans. The concerns are partly about political indoctrination and unfair affirmative action, yes, but also about universities not teaching the right skills that aid in upward mobility. In 2010, roughly 75% of Americans said a college education is “very important,” but just last year that response dropped to an all-time low of 35%.

Against that backdrop, AI looks like the final straw: Why pay tuition when you can be taught how to monetize your YouTube channel for $20 a month by a chatbot who isn’t a Marxist? The uncomfortable truth is that this question has force, but only if universities don’t lean into their traditional value proposition.

For decades, higher education has leaned heavily on a model that primarily treats learning as the absorption of information: watching lectures, doing readings, filling in little bubbles on multiple-choice tests. Many colleges now offer these experiences online, through what used to be called “distance learning” but is now available to all on-campus students, who take such courses out of convenience, not quality. I once overheard a student on campus tell a friend, “I’m not terrible at online classes; I just don’t learn anything in them.”

That model has its advantages of accessibility and scalability, but it was already cracking. Now that easily available AI tools can generate answers in seconds, this prominent educational model is plainly unsustainable. AI-fueled cheating is making it difficult to discern which students have truly absorbed the information, and more students are doubting whether their degree is worth the cost. AI will increasingly offer a much cheaper alternative that explains ideas faster and more clearly than any stuffy, monotone professor.

But information has never been the heart of education; formation has.

Universities at their best are not places where students merely learn facts, but communities where they practice thinking with others. Students commune with peers and mentors to cultivate curiosity, resilience, and judgment: how to weigh evidence, to avoid groupthink, to disagree respectfully. As Aristotle argued, such virtues are cultivated through habit, feedback, and practice, which emerge best from participation in real social environments, not mere exposure to information or virtual environments.

All this talk of character may seem rather traditional. Do I sound like David Brooks, the conservative writer who keeps extolling the virtues of character-building over career-preparation? Well, Brooks has a point, even if he doesn’t always cheer for the same political team as I do. Yet we should avoid a false dichotomy between character and career. The two aren’t mutually exclusive, and it’s not unreasonable for students to care about return-on-investment when they often take on substantial college debt.

Consider what students still get from a serious university education that no algorithm alone can supply. They form sustained relationships with professors who challenge them, mentor them, and eventually vouch for them in letters of recommendation. They are evaluated with assignments that are (virtually) immune to cheating: proctored exams, oral defenses, presentations, live debates. They join clubs, societies, and research groups where their contributions matter to other people, not just to a grade.

These are transformative experiences that promote skills employers consistently report seeking from knowledgeable graduates, such as adaptability, critical thinking, and teamwork. I’ve seen many students come out of their shells to collaborate with others, improve their clarity of thought, and blossom into more confident speakers. These are common experiences on college campuses, though we don’t always communicate them well or prioritize them over online and algorithmically mediated instruction.

Fortunately, AI has already pushed many educators to rethink how they teach. Take-home essays no longer mean what they once did. In response, professors like myself are moving toward in-class writing, oral exams, collaborative projects, experiential learning, and assignments that foreground process over output. This shift is often framed as desperate damage control. Yet it can represent a long-overdue alignment between what educators value and what is actually measured.

Still, this adaptation will not be enough if universities merely sell themselves better while continuing to offer an education organized around content consumption. A key challenge is institutional. Rather than create a new Provost of Artificial Intelligence or rush to blow millions partnering with AI companies, let’s trim administrative bloat to ensure that students actually have opportunities to work closely with faculty, to engage seriously with peers, and to practice civic virtues.

That means fewer experiences designed for scale and more designed for interaction. It means classrooms where students are expected to speak, listen, revise, and respond. It means treating education not as a transaction—credits in, credential out—but as a formative process that changes how people see themselves and the world.

Don’t get me wrong; learning facts about math, science, and society is necessary for a quality education. It’s just not sufficient, especially now that generative AI can provide those facts so efficiently. Sure, AIs can “hallucinate” or make things up, but so can The New York Times. Soon much of society will be proficient at trusting AI outputs only to the degree that they should, fact-checking with some deeper research as needed.

Generative AI will accelerate the erosion of weak educational models but also, sadly, some strong ones. Many small liberal arts colleges geared toward student-faculty interaction just can’t afford to stay in business. (Birmingham just saw one close down in 2024.) Teaching small, in-person classes is expensive. But the other extreme is likewise untenable: large universities of scale that focus on transmitting information to students, often online while they remain at home.

It’s tempting to think this will all balance itself out. Eventually, won’t students and employers realize that AI and YouTube videos give them information without social and critical thinking skills? Aren’t we already appreciating how smartphones and “social” media have hampered the mental health and social skills of a generation, perhaps even of American democracy? AI chatbots may continue to erode social skills and mental health. Then the naysayers will all come crawling back to college! That sounds like wishful thinking, at least if universities prioritize the delivery of information through screens, offering little more of value for a lot more cost.

I’m no AI adversary. Like calculators, computers, and long-form podcasts, generative AI can be a tool that enhances critical thinking and intelligence, even emotional intelligence. But only when used appropriately, at the right times, and in addition to real experiences unmediated by AI. There’s a fine line between enhancing your cognition with ChatGPT and outsourcing it.

Artificial intelligence won’t break universities on its own, but along with lower public confidence it is forcing a reckoning. Universities that respond by doubling down on explaining ideas may suffer. Those that invest in mentorship, cheat-proof evaluation, civic virtues, and shared intellectual life will endure and restore public confidence.

Maybe that’s overconfidence on my part, fueled by a desire to see my profession flourish rather than languish. Even so, weathering the age of AI will depend less on what machines can do than on whether universities recommit to the human work only they can do.

